Higher-degree polynomials would work in theory, but yield models that are not really in the spirit of LOESS. LOESS is based on the ideas that any function can be well approximated in a small neighborhood by a low-order polynomial and that simple models can be fit to data easily. High-degree polynomials would tend to overfit the data in each subset and are numerically unstable, making accurate computations difficult.

In nonlinear regression, on the other hand, it is only necessary to write down a functional form in order to provide estimates of the unknown parameters and the estimated uncertainty. Depending on the application, this could be either a major or a minor drawback to using LOESS. In particular, the simple form of LOESS cannot be used for mechanistic modelling where fitted parameters specify particular physical properties of a system.

In addition, LOESS is very flexible, making it ideal for modeling complex processes for which no theoretical models exist. These two advantages, combined with the simplicity of the method, make LOESS one of the most attractive of the modern regression methods for applications that fit the general framework of least squares regression but which have a complex deterministic structure.

It does this by fitting simple models to localized subsets of the data to build up a function that describes the deterministic part of the variation in the data, point by point. In fact, one of the chief attractions of this method is that the data analyst is not required to specify a global function of any form to fit a model to the data, only to fit segments of the data.

The weighted least squares fit gives more weight to points near the point whose response is being estimated and less weight to points further away. The value of the regression function for the point is then obtained by evaluating the local polynomial using the explanatory variable values for that data point. The LOESS fit is complete after regression function values have been computed for each of the $n$

be related to each other in a simple way than points that are further apart. Following this logic, points that are likely to follow the local model best influence the local model parameter estimates the most. Points that are less likely to actually conform to the local model have less influence on the local model


As mentioned above, the weight function gives the most weight to the data points nearest the point of estimation and the least weight to the data points that are furthest away. The use of the weights is based on the idea that points near each other in the explanatory variable space are more likely to


The trade-off for these features is increased computation. Because it is so computationally intensive, LOESS would have been practically impossible to use in the era when least squares regression was being developed. Most other modern methods for process modeling are similar to LOESS in this respect.


Another disadvantage of LOESS is the fact that it does not produce a regression function that is easily represented by a mathematical formula. This can make it difficult to transfer the results of an analysis to other people. In order to transfer the regression function to another person, they would


LOESS makes less efficient use of data than other least squares methods. It requires fairly large, densely sampled data sets in order to produce good models. This is because LOESS relies on the local data structure when performing the local fitting. Thus, LOESS provides less complex data analysis in

However, any other weight function that satisfies the properties listed in Cleveland (1979) could also be used. The weight for a specific point in any localized subset of data is obtained by evaluating the weight function at the distance between that point and the point of estimation, after scaling


Although it is less obvious than for some of the other methods related to linear least squares regression, LOESS also accrues most of the benefits typically shared by those procedures. The most important of those is the theory for computing uncertainties for prediction and calibration. Many other

As discussed above, the biggest advantage LOESS has over many other methods is that the process of fitting a model to the sample data does not begin with the specification of a function. Instead, the analyst only has to provide a smoothing parameter value and the degree of the local polynomial. In

The subsets of data used for each weighted least squares fit in LOESS are determined by a nearest neighbors algorithm. A user-specified input to the procedure called the "bandwidth" or "smoothing parameter" determines how much of the data is used to fit each local polynomial. The smoothing parameter,

The local polynomials fit to each subset of the data are almost always of first or second degree; that is, either locally linear (in the straight line sense) or locally quadratic. Using a zero degree polynomial turns LOESS into a weighted moving average.


is, the closer the regression function will conform to the data. Using too small a value of the smoothing parameter is not desirable, however, since the regression function will eventually start to capture the random error in the data.

They address situations in which the classical procedures do not perform well or cannot be effectively applied without undue labor. LOESS combines much of the simplicity of linear least squares regression with the flexibility of nonlinear regression.

data points. Many of the details of this method, such as the degree of the polynomial model and the weights, are flexible. The range of choices for each part of the method and typical defaults are briefly discussed next.
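The overall procedure (nearest-neighbour subset, distance-based weights, local weighted least squares fit, evaluation at the point of estimation) can be sketched in a few lines. This is a minimal one-dimensional illustration under stated assumptions, not a reference implementation: the function name `loess_1d`, its parameters, and the use of NumPy are choices made here, tri-cube weights are assumed, and no robustness iterations are performed.

```python
import math
import numpy as np

def loess_1d(x, y, alpha=0.5, degree=1):
    """Illustrative LOESS fit evaluated at every x[i] (hypothetical helper).

    alpha  : smoothing parameter, fraction of the data used in each local fit
    degree : degree of the local polynomial (usually 1 or 2)
    """
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    n = x.size
    # Number of points per local fit: n * alpha rounded up, at least degree + 1.
    k = min(n, max(degree + 1, math.ceil(alpha * n)))
    fitted = np.empty(n)
    for i, x0 in enumerate(x):
        dist = np.abs(x - x0)
        idx = np.argsort(dist, kind="stable")[:k]   # k nearest neighbours of x0
        d_max = dist[idx].max()
        d = dist[idx] / d_max if d_max > 0 else np.zeros(k)
        w = np.clip(1.0 - d**3, 0.0, None) ** 3     # tri-cube weights
        # Design matrix in powers of (x - x0): columns 1, (x - x0), (x - x0)^2, ...
        X = np.vander(x[idx] - x0, degree + 1, increasing=True)
        sw = np.sqrt(w)                             # weighted LS via row scaling
        beta, *_ = np.linalg.lstsq(X * sw[:, None], y[idx] * sw, rcond=None)
        fitted[i] = beta[0]                         # local polynomial evaluated at x0
    return fitted

# Usage: smooth a noisy sine wave (uniform noise, fixed seed for repeatability).
rng = np.random.default_rng(0)
x = np.linspace(0.0, 2.0 * np.pi, 60)
y = np.sin(x) + rng.uniform(-0.3, 0.3, size=x.size)
smooth = loess_1d(x, y, alpha=0.4, degree=2)
```

Centring the design matrix at the point of estimation is a convenience: the intercept of the local fit is then directly the fitted value at that point.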


These methods have been consciously designed to use our current computational ability to the fullest possible advantage to achieve goals not easily achieved by traditional approaches.


Finally, as discussed above, LOESS is a computationally intensive method (with the exception of evenly spaced data, where the regression can then be phrased as a non-causal


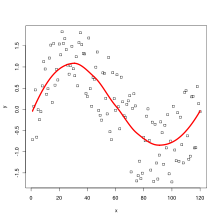


$n\alpha$ points (rounded to the next largest integer) whose explanatory variables' values are closest to the point at which the response is being estimated.
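As a small illustration of how such a local subset might be selected, the sketch below picks the $n\alpha$ nearest points, rounded up. The helper name `local_subset` and the one-dimensional setting are assumptions made for this example only.

```python
import math
import numpy as np

def local_subset(x, x0, alpha):
    """Indices of the ceil(n * alpha) points of x closest to x0 (hypothetical helper)."""
    x = np.asarray(x, dtype=float)
    n = x.size
    k = min(n, math.ceil(n * alpha))            # n * alpha, rounded up to an integer
    order = np.argsort(np.abs(x - x0), kind="stable")
    return order[:k]

x = np.array([0.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0])
idx = local_subset(x, x0=4.2, alpha=0.35)       # 10 * 0.35 = 3.5, so 4 points are used
```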


criterion variable. When each smoothed value is given by a weighted linear least squares regression over the span, this is known as a


filter). LOESS is also prone to the effects of outliers in the data set, like other least squares methods. There is an iterative,

particularly when each smoothed value is given by a weighted quadratic least squares regression over the span of values of the


of data points that are used in each local fit. The subset of data used in each weighted least squares fit thus comprises the


Garimella, Rao Veerabhadra (22 June 2017). "A Simple Introduction to Moving Least Squares and Local Regression Estimation".

Harrell, Frank E. Jr. (2015). Regression Modeling Strategies: With Applications to Linear Models, Logistic and Ordinal Regression, and Survival Analysis. ISBN 978-3-319-19425-7.

$d$ is the distance of a given data point from the point on the curve being fitted, scaled to lie in the range from 0 to 1.

$\alpha$ is called the smoothing parameter because it controls the flexibility of the LOESS regression function. Large values of $\alpha$


$$\operatorname{RSS}_{x}(A)=\sum_{i=1}^{N}(y_{i}-A\hat{x}_{i})^{T}w_{i}(x)(y_{i}-A\hat{x}_{i}).$$


the distance so that the maximum absolute distance over all of the points in the subset of data is exactly one.
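A minimal sketch of this weighting step, assuming the tri-cube function $w(d)=(1-|d|^{3})^{3}$ and one-dimensional data. The helper names `tricube` and `local_weights` are hypothetical, and setting the weight to zero for $|d|\geq 1$ is an assumption made here so that the function is defined for any input.

```python
import numpy as np

def tricube(d):
    """Tri-cube weight (1 - |d|^3)^3 for |d| < 1, and 0 otherwise (assumed clipping)."""
    d = np.abs(np.asarray(d, dtype=float))
    return np.where(d < 1.0, (1.0 - d**3) ** 3, 0.0)

def local_weights(x_subset, x0):
    """Weights for a local subset: distances to x0 are scaled so the largest is exactly 1."""
    dist = np.abs(np.asarray(x_subset, dtype=float) - x0)
    max_dist = dist.max()
    if max_dist == 0.0:                 # all points coincide with x0
        return np.ones_like(dist)
    return tricube(dist / max_dist)

w = local_weights([3.0, 4.0, 5.0, 6.0], x0=4.0)
```

With this scaling, the point of estimation receives weight 1 and the furthest point in the subset receives weight 0.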


William S. Cleveland rediscovered the method in 1979 and gave it a distinct name. The method was further developed by Cleveland and Susan J. Devlin


produce the smoothest functions that wiggle the least in response to fluctuations in the data. The smaller $\alpha$


$w$ is a metric, it is a symmetric, positive-definite matrix and, as such, there is another symmetric matrix $h$ such that $w=h^{2}$


tests and procedures used for validation of least squares models can also be extended to LOESS models.


Friedman, Jerome H. (1984). "A Variable Span Smoother". Laboratory for Computational Statistics. LCS Technical Report 5, SLAC PUB-3466. Stanford University.


In 1964, Savitzky and Golay proposed a method equivalent to LOESS, which is commonly referred to as the Savitzky–Golay filter.


A smooth curve through a set of data points obtained with this statistical technique is called a


$$y^{T}wy=(hy)^{T}(hy)=\operatorname{Tr}(hyy^{T}h)=\operatorname{Tr}(wyy^{T})$$


Cleveland, William S. (1981). "LOWESS: A program for smoothing scatterplots by robust locally weighted regression". The American Statistician.


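Cleveland, William S.; Devlin, Susan J. (1988). "Locally-Weighted Regression: An Approach to Regression Analysis by Local Fitting". Journal of the American Statistical Association. 83 (403): 596–610.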


Consider the following generalisation of the linear regression model with a metric $w(x,z)$

$p$ input parameters and that, as customary in these cases, we embed the input space $\mathbb{R}^{p}$


$k$-nearest-neighbor-based meta-model. In some fields, LOESS is known and commonly referred to as the Savitzky–Golay filter


with uniform noise added. The LOESS curve approximates the original sine wave.

$$w(x,z)=\exp\left(-\frac{\|x-z\|^{2}}{2\alpha^{2}}\right)$$


Nate Silver, How Opinion on Same-Sex Marriage Is Changing, and What It Means


Smoothing by Local Regression: Principles and Methods (PostScript Document)

but too many extreme outliers can still overcome even the robust method.

$$A(x)=YW(x)\hat{X}^{T}(\hat{X}W(x)\hat{X}^{T})^{-1}$$
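The closed-form minimiser $A(x)=YW(x)\hat{X}^{T}(\hat{X}W(x)\hat{X}^{T})^{-1}$ can be evaluated numerically as sketched below. This is an illustration under stated assumptions rather than a definitive implementation: the function names are hypothetical, inputs are stored row-wise and transposed to match the column convention of $\hat{X}$ and $Y$, the Gaussian weight $w(x,z)=\exp(-\|x-z\|^{2}/(2\alpha^{2}))$ is used for the diagonal of $W(x)$, and $\hat{X}W(x)\hat{X}^{T}$ is assumed to be non-singular.

```python
import numpy as np

def gaussian_weights(X, x, alpha):
    """w_i(x) = exp(-||x_i - x||^2 / (2 alpha^2)) for each row x_i of X."""
    sq_dist = np.sum((X - x) ** 2, axis=1)
    return np.exp(-sq_dist / (2.0 * alpha**2))

def local_linear_predict(X, Y, x, alpha=1.0):
    """Evaluate A(x) @ x_hat, with A(x) = Y W X_hat^T (X_hat W X_hat^T)^{-1}.

    X : (N, p) training inputs, Y : (N, m) training outputs, x : (p,) query point.
    """
    X = np.asarray(X, dtype=float)
    Y = np.asarray(Y, dtype=float)
    x = np.asarray(x, dtype=float)
    N = X.shape[0]
    X_hat = np.vstack([np.ones(N), X.T])     # (p+1, N): columns are (1, x_i)
    Y_mat = Y.T                              # (m, N): columns are y_i
    w = gaussian_weights(X, x, alpha)        # diagonal of W(x)
    XW = X_hat * w                           # X_hat @ diag(w)
    G = XW @ X_hat.T                         # X_hat W X_hat^T, symmetric (p+1, p+1)
    B = Y_mat @ XW.T                         # Y W X_hat^T, (m, p+1)
    A = np.linalg.solve(G, B.T).T            # B @ inv(G), using the symmetry of G
    x_hat = np.concatenate(([1.0], x))       # embed the query as (1, x)
    return A @ x_hat
```

Solving the linear system instead of forming the inverse explicitly is a common numerical choice; it gives the same $A(x)$ whenever the matrix is non-singular.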

$$\operatorname{Tr}(W(x)(Y-A\hat{X})^{T}(Y-A\hat{X}))$$

The above loss function can be rearranged into a trace by observing that

Cleveland, William S. (1979). "Robust Locally Weighted Regression and Smoothing Scatterplots". Journal of the American Statistical Association. 74 (368): 829–836.


and setting the result equal to 0 one finds the extremal matrix equation

(1988). LOWESS is also known as locally weighted polynomial regression.


robust version of LOESS that can be used to reduce LOESS' sensitivity to outliers


Moving average and polynomial regression method for smoothing data

$$A\hat{X}W(x)\hat{X}^{T}=YW(x)\hat{X}^{T}$$


enumerates input and output vectors from a training set. Since


respectively, the above loss function can then be written as

2838:"scipy.signal.savgol_filter — SciPy v0.16.1 Reference Guide"


Degrees of freedom (statistics)#In non-standard regression


need the data set and software for LOESS calculations. In

776: + 1 points for a fit, the smoothing parameter


The traditional weight function used for LOESS is the tri-cube weight function, $w(d)=(1-|d|^{3})^{3}$

NIST Engineering Statistics Handbook Section on LOESS


methods that combine multiple regression models in a


Kristen Pavlik, US Environmental Protection Agency,

The supsmu function (Friedman's SuperSmoother) in R

response is being estimated. The polynomial is fitted using weighted least squares

Its most common methods, initially developed for scatterplot smoothing,


LOESS curve fitted to a population sampled from a sine wave

2886:NIST/SEMATECH e-Handbook of Statistical Methods,

Assume that the linear hypothesis is based on

2987:Journal of the American Statistical Association

2909:Journal of the American Statistical Association

3340:National Institute of Standards and Technology

2820:"Savitzky–Golay filtering – MATLAB sgolayfilt"

denoting the degree of the local polynomial.

3264:– A method to perform Local regression on a


2349:{\displaystyle {\hat {X}}W(x){\hat {X}}^{T}}

2782:

2388:{\displaystyle \operatorname {RSS} _{x}(A)}

3274:– sample of LOESS versus linear regression

$x\mapsto\hat{x}:=(1,x)$

3017:"Appendix: Nonparametric Regression in R"

2806:

exchange for greater experimental costs.


$(\lambda+1)/n$

2744:Multivariate adaptive regression splines

Assuming further that the square matrix $\hat{X}W(x)\hat{X}^{T}$

672:is fitted to a subset of the data, with


1145:{\displaystyle x,z\in \mathbb {R} ^{p}}

540:locally estimated scatterplot smoothing


3304:Python implementation (in Statsmodels)

3239:R: Local Polynomial Regression Fitting

3214:Local Regression and Election Modeling


738:, is the fraction of the total number

548:locally weighted scatterplot smoothing

3290:C implementation (from the R project)

3015:Fox, John; Weisberg, Sanford (2018).

7:

3025:An R Companion to Applied Regression

1587:{\displaystyle w_{i}(x):=w(x_{i},x)}

1026:{\displaystyle w(d)=(1-|d|^{3})^{3}}

2356:is non-singular, the loss function

2169:s. Differentiating with respect to

3314:LOESS implementation in pure Julia

1229:{\displaystyle \mathbb {R} ^{p+1}}

664:At each point in the range of the

637:; however, some authorities treat

599:(proposed 15 years before LOESS).

38:it lacks sufficient corresponding

14:

3334: This article incorporates

3329:

3149:

1194:{\displaystyle \mathbb {R} ^{p}}

1111:that depends on two parameters,

1104:{\displaystyle \mathbb {R} ^{m}}

584:. They are two strongly related

552:

466:

23:

3295:Lowess implementation in Cython

606:, such as linear and nonlinear

602:LOESS and LOWESS thus build on

414:Least-squares spectral analysis

352:Generalized estimating equation

172:Multinomial logistic regression

147:Vector generalized linear model

3118:Harrell, Frank E. Jr. (2015).


1859:{\displaystyle {\hat {x}}_{i}}


2133:matrix whose entries are the

1943:{\displaystyle (p+1)\times N}

1530:real matrix of coefficients,

1523:{\displaystyle m\times (p+1)}

1289:, and consider the following

768:Since a polynomial of degree

522:, is a generalization of the

233:Nonlinear mixed-effects model

3043:Friedman, Jerome H. (1984).

676:values near the point whose

3268:moving window (with R code)

1803:. By arranging the vectors

927:Degree of local polynomials

516:local polynomial regression

435:Mean and predicted response

3370:

3045:"A Variable Span Smoother"

1972:{\displaystyle {\hat {X}}}

228:Linear mixed-effects model

3319:JavaScript implementation

3248:R: Scatter Plot Smoothing

2950:The American Statistician

2749:Non-parametric statistics

2126:{\displaystyle N\times N}

1885:{\displaystyle m\times N}

709:Localized subsets of data

586:non-parametric regression

394:Least absolute deviations

3354:Nonparametric regression

3309:LOESS Smoothing in Excel

2888:(accessed 14 April 2017)

2162:{\displaystyle w_{i}(x)}

957:tri-cube weight function

853:{\displaystyle \lambda }

758:{\displaystyle n\alpha }

608:least squares regression

142:Generalized linear model

3250:The Lowess function in

2783:Fox & Weisberg 2018

2704:finite impulse response

2395:attains its minimum at

2107:is the square diagonal

1664:{\displaystyle w=h^{2}}

915:{\displaystyle \alpha }

895:{\displaystyle \alpha }

875:{\displaystyle \alpha }

789:{\displaystyle \alpha }

731:{\displaystyle \alpha }

641:and loess as synonyms.

53:more precise citations.

3336:public domain material

3285:Fortran implementation

3241:The Loess function in

3234:Scatter Plot Smoothing

3229:Local Fitting Software

3105:Cite journal requires

3063:Cite journal requires

3028:(3rd ed.). SAGE.


1866:into the columns of a


1075:{\displaystyle w(x,z)}


682:weighted least squares

473:Mathematics portal

399:Iteratively reweighted

90:

2978:Cleveland, William S.

2946:Cleveland, William S.

2904:Cleveland, William S.

2754:Savitzky–Golay filter

2663:

2557:

2527:A typical choice for


1823:{\displaystyle y_{i}}


651:Savitzky–Golay filter

597:Savitzky–Golay filter

532:scatterplot smoothing

528:polynomial regression

430:Regression validation

409:Bayesian multivariate

126:Polynomial regression

84:

3170:improve this article

2882:"LOESS (aka LOWESS)"

2759:Segmented regression

2734:Moving least squares

2697:nonlinear regression


1082:on the target space


674:explanatory variable

655:William S. Cleveland

612:nonlinear regression

455:Gauss–Markov theorem

450:Studentized residual

440:Errors and residuals

274:Principal components

244:Nonlinear regression

131:General linear model

3324:Java implementation

3182:footnote references

2884:, section 4.1.4.4,

604:"classical" methods

550:), both pronounced

300:Errors-in-variables

167:Logistic regression

157:Binomial regression

102:Regression analysis

96:Part of a series on


1594:and the subscript


772:requires at least

755:

728:

694:

187:Multinomial probit

91:

3210:

3209:

3202:

3131:978-3-319-19425-7

3035:978-1-5443-3645-9

2857:Loess (or Lowess)

2729:Kernel regression

2651:

2491:

2466:

2445:

2337:

2312:

2273:

2236:

2211:

2182:{\displaystyle A}

2100:{\displaystyle W}

2065:

2031:

1966:

1905:{\displaystyle Y}

1847:

1631:{\displaystyle h}

1611:{\displaystyle w}

1485:{\displaystyle A}

1444:

1377:

1258:

1165:{\displaystyle p}

697:{\displaystyle n}

593:-nearest-neighbor

520:moving regression

509:

508:

162:Binary regression

121:Simple regression

116:Linear regression


2994:(403): 596–610.

2982:Devlin, Susan J.

2973:

2941:

2916:(368): 829–836.


796:must be between


645:Model definition


518:, also known as

512:Local regression

501:

494:

487:

471:

470:

378:Ridge regression

213:Multilevel model

93:

74:

67:

63:

60:

54:

49:this article by

40:inline citations


3279:Implementations

3206:

3195:

3189:

3186:

3167:

3158:This article's

3154:

3150:

3143:

3138:

3132:

3117:

3104:

3094:

3082:10.2172/1367799

3075:

3062:

3052:

3047:

3042:

3036:

3019:

3014:

3000:10.2307/2289282

2976:

2962:10.2307/2683591

2944:

2922:10.2307/2286407


2564:Gaussian weight


940:Weight function


659:Susan J. Devlin

647:

578:

555:

551:

505:

465:

445:Goodness of fit

152:Discrete choice

75:

64:

58:

55:

45:Please help to


3262:Quantile LOESS

3259:

3254:

3245:

3236:

3231:

3226:

3221:

3216:

3208:

3207:

3162:external links

3157:

3155:

3148:

3142:

3141:External links

3139:

3137:

3136:

3130:

3115:

3107:|journal=

3073:

3065:|journal=

3040:

3034:

3012:

2974:

2942:

2899:

2897:

2894:

2891:

2890:

2866:

2862:Nutrient Steps

2847:

2842:Docs.scipy.org

2829:

2811:

2807:Garimella 2017

2799:

2787:

2774:

2773:

2771:

2768:

2766:

2763:

2762:

2761:

2756:

2751:

2746:

2741:

2739:Moving average


934:moving average


524:moving average


254:Semiparametric

251:

246:

238:

237:

236:

235:

230:

225:

223:Random effects

220:

215:

207:

206:

205:

204:

199:

197:Ordered probit


3338:from the


3190:November 2021

3183:

3179:

3178:inappropriate


2824:Mathworks.com

2821:

2815:

2812:

2808:

2803:

2800:

2797:, p. 29.

2796:

2791:

2788:

2784:

2779:

2776:

2769:

2764:

2760:

2757:

2755:

2752:

2750:

2747:

2745:

2742:

2740:

2737:

2735:

2732:

2730:

2727:

2725:

2722:

2721:

2717:

2715:

2713:

2709:

2705:

2700:

2698:

2692:

2686:Disadvantages


1291:loss function

1273:

1270:

1267:

1261:

1252:

1243:

1221:

1218:

1215:

1186:

1159:

1137:

1127:

1124:

1121:

1118:

1096:

1066:

1063:

1060:

1054:

1045:

1041:

1039:

1018:

1008:

998:

990:

987:

981:

975:

969:

962:

961:

960:

958:

953:

951:

948:

939:

937:

935:

926:

924:

909:

889:

869:

861:

847:

827:

823:

818:

814:

811:

808:

804:

783:

775:

771:

766:

752:

749:

741:

725:

716:

708:

706:

691:

683:

679:

675:

671:

668:a low-degree

667:

662:

660:

656:

652:

644:

642:

640:

636:

632:

628:

624:

619:

615:

613:

609:

605:

600:

598:

594:

592:

587:

583:

582:

573:

549:

545:

541:

537:

533:

529:

525:

521:

517:

513:

502:

497:

495:

490:

488:

483:

482:

480:

479:

474:

469:

464:

463:

462:

461:

456:

453:

451:

448:

446:

443:

441:

438:

436:

433:

431:

428:

427:

426:

425:

420:

415:

412:

410:

407:

405:

402:

400:

397:

395:

392:

391:

390:

389:

384:

381:

379:

376:

374:

371:

369:

366:

364:

361:

360:

359:

358:

353:

350:

348:

345:

343:

340:

338:

335:

334:

333:

332:

327:

324:

322:

319:

317:

316:Least squares

314:

313:

312:

311:

306:

301:

298:

297:

296:

295:

290:

287:

285:

282:

280:

277:

275:

272:

270:

267:

265:

262:

260:

257:

255:

252:

250:

249:Nonparametric

247:

245:

242:

241:

240:

239:

234:

231:

229:

226:

224:

221:

219:

218:Fixed effects

216:

214:

211:

210:

209:

208:

203:

200:

198:

195:

193:

192:Ordered logit

190:

188:

185:

183:

180:

178:

175:

173:

170:

168:

165:

163:

160:

158:

155:

153:

150:

148:

145:

143:

140:

139:

138:

137:

132:

129:

127:

124:

122:

119:

117:

114:

113:

112:

111:

106:

103:

99:

95:

94:

88:

83:

73:

70:

62:

52:

48:

42:

41:

35:

30:

21:

20:

3328:

3265:

3196:

3187:

3172:by removing

3159:

3124:. Springer.

3120:

3098:cite journal

3056:cite journal

3024:

2991:

2985:

2953:

2949:

2913:

2907:

2885:

2864:, July 2016.

2861:

2855:

2850:

2841:

2832:

2823:

2814:

2802:

2795:Harrell 2015

2790:

2778:

2701:

2693:

2689:

2680:

2676:

2526:

2295:

2086:

1595:

1471:

1290:

1046:

1042:

1037:

1035:

954:

943:

930:

862:

840:and 1, with

773:

769:

767:

739:

714:

712:

663:

648:

638:

635:lowess curve

634:

626:

622:

620:

616:

601:

590:

547:

543:

539:

535:

519:

515:

511:

510:

373:Non-negative

283:

65:

56:

37:

2785:, Appendix.

631:scattergram

623:loess curve

383:Regularized

347:Generalized

279:Least angle

177:Mixed logit

51:introducing

3299:Carl Vogel

2765:References

2673:Advantages

1638:such that

670:polynomial

422:Background

326:Non-linear

308:Estimation

34:references

3174:excessive

2956:(1): 54.

2770:Citations

The smoothing parameter $\alpha$ must lie between $(\lambda + 1)/n$ and 1, with $\lambda$ the degree of the local polynomial and $n$ the number of data points.

The tricube weight function is

$$w(d) = (1 - |d|^3)^3,$$

where $d$ is the distance of a data point from the point being fitted, scaled to lie between 0 and 1.

Let $w(x, z)$ be a weight function on inputs $x, z \in \mathbb{R}^p$, with responses in $\mathbb{R}^m$. Embedding $\mathbb{R}^p$ into $\mathbb{R}^{p+1}$ as $x \mapsto \hat{x} := (1, x)$, consider the loss function

$$\operatorname{RSS}_x(A) = \sum_{i=1}^{N} (y_i - A\hat{x}_i)^T \, w_i(x) \, (y_i - A\hat{x}_i).$$

Here, $A$ is an $m \times (p+1)$ matrix, $w_i(x) := w(x_i, x)$, and the subscript $i$ enumerates the input and output vectors. For a weight $w$ and an $h$ such that $w = h^2$,

$$y^T w y = (hy)^T (hy) = \operatorname{Tr}(h y y^T h) = \operatorname{Tr}(w y y^T).$$

Arranging the vectors $y_i$ and $\hat{x}_i$ into the columns of an $m \times N$ matrix $Y$ and an $(p+1) \times N$ matrix $\hat{X}$, the loss function becomes

$$\operatorname{Tr}\left( W(x) \, (Y - A\hat{X})^T (Y - A\hat{X}) \right),$$

where $W$ is the $N \times N$ diagonal matrix with entries $w_i(x)$. The optimal $A$ satisfies

$$A \hat{X} W(x) \hat{X}^T = Y W(x) \hat{X}^T.$$

If $\hat{X} W(x) \hat{X}^T$ is non-singular, $\operatorname{RSS}_x(A)$ is minimized at

$$A(x) = Y W(x) \hat{X}^T \left( \hat{X} W(x) \hat{X}^T \right)^{-1}.$$

A common choice for $w(x, z)$ is the Gaussian weight

$$w(x, z) = \exp\left( -\frac{\|x - z\|^2}{2\alpha^2} \right).$$
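As an illustration of the weighted least-squares step, the following is a minimal sketch of a local linear fit in one dimension ($p = 1$, $m = 1$) using the tricube weight. The function names `tricube` and `loess_point`, the choice of the `ceil(alpha * n)` nearest points with a floor of three, and the fallback to a plain average when the 2×2 system is singular are assumptions made for this example, not part of any particular library. The inputs are centered on the query point, so the fitted intercept is the estimate.

```python
import math


def tricube(d):
    """Tricube weight w(d) = (1 - |d|^3)^3 for |d| < 1, and 0 otherwise."""
    d = abs(d)
    return (1.0 - d ** 3) ** 3 if d < 1.0 else 0.0


def loess_point(x0, xs, ys, alpha=0.5):
    """Local linear estimate of the response at x0 (hypothetical helper).

    alpha is the fraction of the n data points used in the local fit.
    """
    n = len(xs)
    # Assumption: use ceil(alpha * n) nearest points, but never fewer than 3.
    k = min(n, max(3, math.ceil(alpha * n)))
    nearest = sorted((abs(x - x0), y) for x, y in zip(xs, ys))[:k]
    fallback = sum(y for _, y in nearest) / len(nearest)
    h = nearest[-1][0]  # distance to the k-th nearest point (local bandwidth)
    if h == 0.0:
        return fallback  # all neighbours coincide with x0

    # Accumulate the entries of X W X^T and Y W X^T with u = x - x0.
    sw = swu = swuu = swy = swuy = 0.0
    for x, y in zip(xs, ys):
        u = x - x0
        w = tricube(u / h)
        sw += w
        swu += w * u
        swuu += w * u * u
        swy += w * y
        swuy += w * u * y

    det = sw * swuu - swu * swu
    if abs(det) < 1e-12:
        # Singular system: fall back to a (weighted) average.
        return swy / sw if sw > 0.0 else fallback
    # Intercept of the weighted line; because u is centered at x0,
    # the intercept is the fitted value at x0.
    return (swy * swuu - swu * swuy) / det


xs = [float(i) for i in range(10)]
ys = [2.0 * x + 1.0 for x in xs]
estimate = loess_point(4.5, xs, ys, alpha=0.5)
```

Because the example data lie exactly on a line, a local linear fit reproduces that line, so `estimate` agrees with $2 \cdot 4.5 + 1$ up to floating-point rounding.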

Text is available under the Creative Commons Attribution-ShareAlike License. Additional terms may apply.