(if any), and are thus relatively cheap to create and destroy. Thread switching is also relatively cheap: it requires a context switch (saving and restoring registers and the stack pointer), but does not change virtual memory and is thus cache-friendly (leaving the TLB valid). The kernel can assign one or more software threads to each core in a CPU (a core may accept several software threads at once, depending on its support for multithreading), and can swap out threads that get blocked. However, kernel threads take much longer than user threads to be swapped.

61:

(GIL). The GIL is a mutual exclusion lock held by the interpreter that can prevent the interpreter from interpreting the application's code on two or more threads at once. This effectively limits parallelism on multi-core systems. It also limits performance for processor-bound threads (which require the processor), but does not affect I/O-bound or network-bound threads as much. Other implementations of interpreted programming languages, such as


801:" threading systems are more complex to implement than either kernel or user threads, because changes to both kernel and user-space code are required. In the M:N implementation, the threading library is responsible for scheduling user threads on the available schedulable entities; this makes context switching of threads very fast, as it avoids system calls. However, this increases complexity and the likelihood of

1291:

742::1 model implies that all application-level threads map to one kernel-level scheduled entity; the kernel has no knowledge of the application threads. With this approach, context switching can be done very quickly and, in addition, it can be implemented even on simple kernels which do not support threading. One of the major drawbacks, however, is that it cannot benefit from the hardware acceleration on

363:

problem is when performing I/O: most programs are written to perform I/O synchronously. When an I/O operation is initiated, a system call is made, and it does not return until the I/O operation has been completed. In the intervening period, the entire process is "blocked" by the kernel and cannot run, which prevents other user threads and fibers in the same process from executing.

. In general, multithreaded programs are non-deterministic and, as a result, effectively untestable: a multithreaded program can easily have bugs that never manifest on a test system and appear only in production. This can be alleviated by restricting inter-thread communication to certain well-defined patterns (such as message-passing).
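The message-passing pattern mentioned above can be sketched as follows. This is a minimal illustration, assuming Python's standard `queue` and `threading` modules; the names `consumer`, `sum_via_messages` and the `_STOP` sentinel are hypothetical and introduced only for this example. The two threads share no mutable state other than the queues, so the only interaction points are `put` and `get`.

```python
import queue
import threading

_STOP = object()  # hypothetical sentinel marking the end of the message stream


def consumer(inbox, outbox):
    """Receive numbers from inbox until _STOP arrives, then send back the total."""
    total = 0
    while True:
        item = inbox.get()        # blocks until a message is available
        if item is _STOP:
            break
        total += item
    outbox.put(total)


def sum_via_messages(numbers):
    """Send each number to a worker thread as a message and return its reply."""
    inbox = queue.Queue()
    outbox = queue.Queue()
    worker = threading.Thread(target=consumer, args=(inbox, outbox))
    worker.start()
    for n in numbers:
        inbox.put(n)
    inbox.put(_STOP)
    worker.join()
    return outbox.get()


if __name__ == "__main__":
    print(sum_via_messages(range(1, 101)))
```

Because `queue.Queue` does its own locking internally, the application code contains no explicit synchronization primitives.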

885:

implement LWPs as kernel threads (1:1 model). SunOS 5.2 through SunOS 5.8, as well as NetBSD 2 to NetBSD 4, implemented a two-level model, multiplexing one or more user-level threads on each kernel thread (M:N model). SunOS 5.9 and later, as well as NetBSD 5, eliminated user-thread support, returning

366:

A common solution to this problem (used, in particular, by many green threads implementations) is providing an I/O API that implements an interface that blocks the calling thread, rather than the entire process, by using non-blocking I/O internally, and scheduling another user thread or fiber while

358:

As user thread implementations are typically entirely in userspace, context switching between user threads within the same process is extremely efficient because it does not require any interaction with the kernel at all: a context switch can be performed by locally saving the CPU registers used by

1032:

where a set number of threads are created at startup that then wait for a task to be assigned. When a new task arrives, one of the waiting threads wakes up, completes the task and goes back to waiting. This avoids the relatively expensive thread creation and destruction functions for every task performed and takes thread

921:

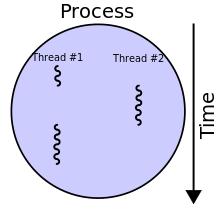

Multithreading is mainly found in multitasking operating systems. Multithreading is a widespread programming and execution model that allows multiple threads to exist within the context of one process. These threads share the process's resources, but are able to execute independently. The threaded

1128:

has written: "Although threads seem to be a small step from sequential computation, in fact, they represent a huge step. They discard the most essential and appealing properties of sequential computation: understandability, predictability, and determinism. Threads, as a model of computation, are

933:

Multithreading libraries tend to provide a function call to create a new thread, which takes a function as a parameter. A concurrent thread is then created which starts running the passed function and ends when the function returns. The thread libraries also offer data synchronization functions.
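As a minimal sketch of this create-and-run interface, assuming Python's standard `threading` module, the example below passes a function to each new thread and waits for it to return. The names `worker` and `run_workers` are hypothetical and chosen only for illustration.

```python
import threading


def worker(name, results):
    # Runs in the new thread; the thread ends when this function returns.
    results.append(f"hello from {name}")


def run_workers(count):
    """Start `count` threads, wait for all of them, and return their messages."""
    results = []  # visible to every thread because they share one address space
    threads = [
        threading.Thread(target=worker, args=(f"thread-{i}", results))
        for i in range(count)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()  # block until the thread's function has returned
    return sorted(results)  # sorted because completion order is not guaranteed


if __name__ == "__main__":
    print(run_workers(3))
```

The result is sorted before being returned because the order in which the threads append their messages depends on scheduling.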

362:

However, the use of blocking system calls in user threads (as opposed to kernel threads) can be problematic. If a user thread or a fiber performs a system call that blocks, the other user threads and fibers in the process are unable to run until the system call returns. A typical example of this

367:

the I/O operation is in progress. Similar solutions can be provided for other blocking system calls. Alternatively, the program can be written to avoid the use of synchronous I/O or other blocking system calls (in particular, using non-blocking I/O, including lambda continuations and/or async/

1067:: applications looking to use multicore or multi-CPU systems can use multithreading to split data and tasks into parallel subtasks and let the underlying architecture manage how the threads run, either concurrently on one core or in parallel on multiple cores. GPU computing environments like

233:, which share the process's resources, such as memory and file handles – a process is a unit of resources, while a thread is a unit of scheduling and execution. Kernel scheduling is typically uniformly done preemptively or, less commonly, cooperatively. At the user level a process such as a

400:. A fiber can be scheduled to run in any thread in the same process. This permits applications to gain performance improvements by managing scheduling themselves, instead of relying on the kernel scheduler (which may not be tuned for the application). Some research implementations of the

1212:

languages, expose threading to developers while abstracting the platform specific differences in threading implementations in the runtime. Several other programming languages and language extensions also try to abstract the concept of concurrency and threading from the developer fully

302:

is a "lightweight" unit of kernel scheduling. At least one kernel thread exists within each process. If multiple kernel threads exist within a process, then they share the same memory and file resources. Kernel threads are preemptively multitasked if the operating system's process

194:

The use of threads in software applications became more common in the early 2000s as CPUs began to utilize multiple cores. Applications that wanted to benefit from multiple cores had to employ concurrency in order to use them.

886:

to a 1:1 model. FreeBSD 5 implemented the M:N model. FreeBSD 6 supported both 1:1 and M:N; users could choose which one should be used with a given program using /etc/libmap.conf. Starting with FreeBSD 7, the 1:1 model became the default. FreeBSD 8 no longer supports the M:N model.

1052:

that runs concurrently with the main execution thread, it is possible for the application to remain responsive to user input while executing tasks in the background. On the other hand, in most cases multithreading is not the only way to keep a program responsive, with

359:

the currently executing user thread or fiber and then loading the registers required by the user thread or fiber to be executed. Since scheduling occurs in userspace, the scheduling policy can be more easily tailored to the requirements of the program's workload.
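The idea of switching between tasks entirely in user space, under a policy chosen by the program, can be illustrated with a toy round-robin scheduler. This is only an analogy, assuming Python generators stand in for user threads: the interpreter saves and restores generator state rather than CPU registers, and `task`, `run_round_robin` and `demo` are hypothetical names.

```python
from collections import deque


def task(name, steps, log):
    """A cooperative task: does one unit of work, then yields control."""
    for i in range(steps):
        log.append(f"{name}:{i}")
        yield  # voluntarily hand control back to the scheduler


def run_round_robin(tasks):
    """User-space scheduler: resume each ready task in turn until all finish."""
    ready = deque(tasks)
    while ready:
        current = ready.popleft()
        try:
            next(current)          # "switch" to the task until its next yield
            ready.append(current)  # still runnable, so requeue at the back
        except StopIteration:
            pass                   # task finished, so drop it


def demo():
    log = []
    run_round_robin([task("a", 2, log), task("b", 3, log)])
    return log


if __name__ == "__main__":
    print(demo())  # ['a:0', 'b:0', 'a:1', 'b:1', 'b:2']
```

Replacing the `deque` with a priority queue would change the scheduling policy without any kernel involvement, which is the flexibility the paragraph describes.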

962:

if they require more than one CPU instruction to update: two threads may end up attempting to update the data structure at the same time and find it unexpectedly changing underfoot. Bugs caused by race conditions can be very difficult to reproduce and isolate.
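A minimal sketch of guarding such a multi-step update with a mutual exclusion lock, assuming Python's standard `threading` module; the `Counter` class and `count_in_parallel` helper are hypothetical names used only here. The statement `self.value += 1` is a read-modify-write sequence, so without the lock two threads could interleave between the read and the write and lose an update.

```python
import threading


class Counter:
    """A shared counter whose increment is protected by a lock."""

    def __init__(self):
        self.value = 0
        self._lock = threading.Lock()

    def increment(self):
        # Only one thread at a time may execute the read-modify-write below.
        with self._lock:
            self.value += 1


def count_in_parallel(num_threads, increments_each):
    """Increment one shared Counter from several threads and return the total."""
    counter = Counter()

    def work():
        for _ in range(increments_each):
            counter.increment()

    threads = [threading.Thread(target=work) for _ in range(num_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter.value


if __name__ == "__main__":
    print(count_in_parallel(4, 10_000))  # 40000
```

With the lock in place the final value always equals the number of threads times the increments per thread.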

. Because a thread context switch on modern CPUs can cost up to 1 million CPU cycles, writing efficient multithreaded programs is difficult. In particular, special attention has to be paid to keeping inter-thread synchronization from becoming too frequent.

651:

usually occurs frequently enough that users perceive the threads or tasks as running in parallel (for popular server/desktop operating systems, the maximum time slice of a thread, when other threads are waiting, is often limited to 100–200 ms). On a

1048:: multithreading can allow an application to remain responsive to input. In a one-thread program, if the main execution thread blocks on a long-running task, the entire application can appear to freeze. By moving such long-running tasks to a

1267:

in parallel using only its ID to find its data in memory. In essence, the application must be designed so that each thread performs the same operation on different segments of memory so that they can operate in parallel and use the GPU

524:: due to threads sharing the same address space, an illegal operation performed by a thread can crash the entire process; therefore, one misbehaving thread can disrupt the processing of all the other threads in the application.

750:

computers: there is never more than one thread being scheduled at the same time. For example, if one of the threads needs to execute an I/O request, the whole process is blocked and the threading advantage cannot be used. The

982:

data structures against concurrent access. On uniprocessor systems, a thread running into a locked mutex must sleep and hence trigger a context switch. On multi-processor systems, the thread may instead poll the mutex in a

282:. Creating or destroying a process is relatively expensive, as resources must be acquired or released. Processes are typically preemptively multitasked, and process switching is relatively expensive, beyond basic cost of

1162:) as early as in the late 1960s, and this was continued in the Optimizing Compiler and later versions. The IBM Enterprise PL/I compiler introduced a new model "thread" API. Neither version was part of the PL/I standard.

1177:(Pthreads), which is a set of C-function library calls. OS vendors are free to implement the interface as desired, but the application developer should be able to use the same interface across multiple platforms. Most

278:, and do not share address spaces or file resources except through explicit methods such as inheriting file handles or shared memory segments, or mapping the same file in a shared way – see

1252:

using the Thread extension, avoid the GIL limit by using an Apartment model where data and code must be explicitly "shared" between threads. In Tcl each thread has one or more interpreters.

237:

can itself schedule multiple threads of execution. If these do not share data, as in Erlang, they are usually analogously called processes, while if they share data they are usually called


339:. The kernel is unaware of them, so they are managed and scheduled in userspace. Some implementations base their user threads on top of several kernel threads, to benefit from

247:; different processes may schedule user threads differently. User threads may be executed by kernel threads in various ways (one-to-one, many-to-one, many-to-many). The term "

2744:

286:, due to issues such as cache flushing (in particular, process switching changes virtual memory addressing, causing invalidation and thus flushing of an untagged

2070:

1083:. This, in turn, enables better system utilization, and (provided that synchronization costs don't eat the benefits up), can provide faster program execution.


management out of the application developer's hand and leaves it to a library or the operating system that is better suited to optimize thread management.
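The thread pool pattern can be sketched with Python's standard `concurrent.futures.ThreadPoolExecutor`, which is assumed here as one library implementation of the idea; `square` and `run_pool` are hypothetical names. Unlike the description above, this executor starts its worker threads on demand up to `max_workers` rather than all at startup, but the threads are still reused across tasks.

```python
from concurrent.futures import ThreadPoolExecutor


def square(n):
    """Stand-in for a task; real tasks would usually do I/O or longer work."""
    return n * n


def run_pool(values, workers=4):
    """Hand each value to a pool of reusable worker threads and collect results."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() queues one task per value and yields results in input order.
        return list(pool.map(square, values))


if __name__ == "__main__":
    print(run_pool([1, 2, 3, 4, 5]))  # [1, 4, 9, 16, 25]
```

The application never creates or destroys a thread itself; it only submits tasks, and leaving the `with` block waits for outstanding work and shuts the pool down.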


programming model provides developers with a useful abstraction of concurrent execution. Multithreading can also be applied to one process to enable

1116:) to prevent common data from being read or overwritten in one thread while being modified by another. Careless use of such primitives can lead to

1173:

support threading, and provide access to the native threading APIs of the operating system. A standardized interface for thread implementation is

122:, while different processes do not share these resources. In particular, the threads of a process share its executable code and the values of its

683:

Threads created by the user in a 1:1 correspondence with schedulable entities in the kernel are the simplest possible threading implementation.


805:, as well as suboptimal scheduling without extensive (and expensive) coordination between the userland scheduler and the kernel scheduler.

1225:(MPI)). Some languages are designed for sequential parallelism instead (especially using GPUs), without requiring concurrency or threads (


is a "heavyweight" unit of kernel scheduling, as creating, destroying, and switching processes is relatively expensive. Processes own

598:, although threads were still used on such computers because switching between threads was generally still quicker than full-process


Ferat, Manuel; Pereira, Romain; Roussel, Adrien; Carribault, Patrick; Steffenel, Luiz-Angelo; Gautier, Thierry (September 2022).


of threads: using threads, an application can operate using fewer resources than it would need when using multiple processes.


1568:. IWOMP 2022: 18th International Workshop on OpenMP. Lecture Notes in Computer Science. Vol. 13527. pp. 3–16.


408:, with the distinction being that coroutines are a language-level construct, while fibers are a system-level construct.


number of kernel entities, or "virtual processors." This is a compromise between kernel-level ("1:1") and user-level ("


558:. However, preemptive scheduling may context-switch threads at moments unanticipated by programmers, thus causing


and other non-intuitive behaviors. In order for data to be correctly manipulated, threads will often need to


251:" variously refers to user threads or to kernel mechanisms for scheduling user threads onto kernel threads.

40:

1181:

platforms, including Linux, support Pthreads. Microsoft Windows has its own set of thread functions in the


have a different threading model that supports extremely large numbers of threads (for modeling hardware).


664:, with every processor or core executing a separate thread simultaneously; on a processor or core with


Eight Ways to Handle Non-blocking Returns in Message-passing Programs: from C++98 via C++11 to C++20

1244:

for Python) which support threading and concurrency but not parallel execution of threads, due to a

1129:

wildly non-deterministic, and the job of the programmer becomes one of pruning that nondeterminism."

462:

between threads in the same process typically occurs faster than context switching between processes


Threads in the same process share the same address space. This allows concurrently running code to

903:

435:

information than threads, whereas multiple threads within a process share process state as well as


processes; in other operating systems there is not so great a difference except in the cost of an


Until the early 2000s, most desktop computers had only one single-core CPU, with no support for

1559:"Enhancing MPI+OpenMP Task Based Applications for Heterogeneous Architectures with GPU support"

404:

parallel programming model implement their tasks through fibers. Closely related to fibers are


can be used differently to mean "backtracking within a single thread", which is common in the

283:

275:

123:

396:" to allow another fiber to run, which makes their implementation much easier than kernel or

46:

Smallest sequence of programmed instructions that can be managed independently by a scheduler


complexity and related bugs: when using shared resources typical for threaded programs, the

1076:

1054:

1003:

670:, separate software threads can also be executed concurrently by separate hardware threads.

547:

436:

241:, particularly if preemptively scheduled. Cooperatively scheduled user threads are known as

104:

88:

64:

1505:

99:

is the smallest sequence of programmed instructions that can be managed independently by a


1614:. The 28th International Conference on Parallel Architectures and Compilation Techniques.


Scheduling can be done at the kernel level or user level, and multitasking can be done

81:

262:

allocated by the operating system. Resources include memory (for both code and data),


1386:"How to Make a Multiprocessor Computer That Correctly Executes Multiprocess Programs"


used by older versions of the NetBSD native POSIX threads library implementation (an


in time in order to process the data in the correct order. Threads may also require

958:. When shared between threads, however, even simple data structures become prone to


This article is about the concurrency concept. For multithreading in hardware, see

1605:

Iwasaki, Shintaro; Amer, Abdelhalim; Taura, Kenjiro; Seo, Sangmin; Balaji, Pavan.

1481:

1041:

Multithreaded applications have the following advantages vs single-threaded ones:

586:

other threads by not yielding control of execution during intensive computation.

570:

relies on threads to relinquish control of execution, thus ensuring that threads


tightly and conveniently exchange data without the overhead or complexity of an

559:

428:

processes are typically independent, while threads exist as subsets of a process

518:(IPC), threads can communicate through data, code and files they already share.


1482:"The Free Lunch Is Over: A Fundamental Turn Toward Concurrency in Software"


uses lightweight threads which are scheduled on operating system threads.

1075:

use the multithreading model where dozens to hundreds of threads run in


OpenMP in a Modern World: From Multi-device Support to Meta Programming

1275:

1241:

716:

635:

Systems with a single processor generally implement multithreading by


A few interpreted programming languages have implementations (e.g.,

1170:

825:

model as opposed to a 1:1 kernel or userspace implementation model)


BOLT: Optimizing OpenMP Parallel Regions with User-Level Threads


Multithreaded programs vs single-threaded programs pros and cons

684:

574:. This can cause problems if a cooperatively multitasked thread

471:

307:

is preemptive. Kernel threads do not own resources except for a

2673:

1904:

1787:"Multi-threading at Business-logic Level is Considered Harmful"

1150:

Many programming languages support threading in some capacity.

991:(SMP) systems to contend for the memory bus, especially if the

3143:

2478:

2455:

1249:

839:

724:

625:

497:

Advantages and disadvantages of threads vs processes include:

487:

183:

Threads made an early appearance under the name of "tasks" in

147:

118:(via multithreading capabilities), sharing resources such as

987:. Both of these may sap performance and force processors in

1028:

A popular programming pattern involving threads is that of

1843:

Bradford Nichols, Dick Buttlar, Jacqueline Proulx Farell:

1087:

Multithreaded applications have the following drawbacks:

1639:(9th ed.). Hoboken, N.J.: Wiley. pp. 170–171.

114:

The multiple threads of a given process may be executed

53:

A process with two threads of execution, running on one

185:

OS/360 Multiprogramming with a Variable Number of Tasks

164:

554:

for its finer-grained control over execution time via

1661:"Multithreading in the Solaris Operating Environment"

906:

at a time. In the formal analysis of the variables'


1525:. CPPCON. Archived from the original on 2020-11-25

388:are an even lighter unit of scheduling which are


1460:Traffic Control in a Multiplexed Computer System

1158:(F) included support for multithreading (called

452:processes interact only through system-provided

1711:O'Hearn, Peter William; Tennent, R. D. (1997).

1061:being available for obtaining similar results.
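As a hedged illustration of keeping a program responsive without additional threads, the sketch below uses Python's standard `asyncio` event loop on a single thread. The coroutines `slow_task` and `ui_tick` and the helper `demo_async` are hypothetical; `asyncio.sleep` stands in for a long non-blocking I/O wait and for periodic input handling.

```python
import asyncio


async def slow_task(log):
    # Stand-in for a long I/O operation; awaiting yields control to the loop.
    await asyncio.sleep(0.2)
    log.append("slow task done")


async def ui_tick(log):
    # Stand-in for handling user input while the slow task is pending.
    for i in range(3):
        log.append(f"tick {i}")
        await asyncio.sleep(0.01)


async def main():
    log = []
    # Both coroutines run concurrently on one thread, interleaving at each await.
    await asyncio.gather(slow_task(log), ui_tick(log))
    return log


def demo_async():
    return asyncio.run(main())


if __name__ == "__main__":
    print(demo_async())
```

The ticks are logged while the slow task is still waiting, which is the responsiveness effect described above, obtained here through cooperative scheduling rather than a background thread.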

510:of threads: unlike processes, which require a

107:. In many cases, a thread is a component of a

31:. For the form of code consisting entirely of

2685:

1916:

1690:. Mike Murach & Associates. p. 512.

486:switch, which on some architectures (notably

211:. This yields a variety of related concepts.

8:

1627:

1625:

1623:

1621:

691:used this approach from the start, while on

864:History of threading models in Unix systems

449:, whereas threads share their address space

2912:

2820:

2692:

2678:

2670:

1923:

1909:

1901:

1635:; Galvin, Peter Baer; Gagne, Greg (2013).

538:Operating systems schedule threads either

266:, sockets, device handles, windows, and a

890:Single-threaded vs multithreaded programs

620:; in 2005, they introduced the dual-core

1433:. Prentice-Hall International Editions.

785:number of application threads onto some

660:system, multiple threads can execute in

59:

1744:"Single-Threading: Back to the Future?"

1466:(Doctor of Science thesis). p. 20.

1373:

1765:

1763:

1687:Murach's CICS for the COBOL Programmer

1535:

566:, or other side-effects. In contrast,

514:or shared memory mechanism to perform

187:(MVT) in 1967. Saltzer (1966) credits

29:Multithreading (computer architecture)

1812:"Operation Costs in CPU Clock Cycles"

344:

331:Threads are sometimes implemented in

7:

1873:Multithreading Applications in Win32

1810:'No Bugs' Hare (12 September 2016).

1112:operations (often implemented using

1092:

534:Preemptive vs cooperative scheduling

508:Simplified sharing and communication

140:differs between operating systems.

1785:Ignatchenko, Sergey (August 2015).

1742:Ignatchenko, Sergey (August 2010).

1684:Menéndez, Raúl; Lowe, Doug (2001).

1185:interface for multithreading, like

998:Other synchronization APIs include

755:uses User-level threading, as does

392:: a running fiber must explicitly "

103:, which is typically a part of the

1326:Multithreading (computer hardware)

1311:Communicating sequential processes

968:application programming interfaces

699:implements this approach (via the

590:Single- vs multi-processor systems

431:processes carry considerably more

347:). User threads as implemented by

136:The implementation of threads and

25:

1887:Unix Internals: the New Frontiers

1200:) programming languages, such as

707:). This approach is also used by

643:(CPU) switches between different

397:

2796:Object-oriented operating system

2653:

2652:

1770:Lee, Edward (January 10, 2006).

1289:

938:Threads and data synchronization

416:Threads differ from traditional

151:

2124:Analysis of parallel algorithms

2806:Supercomputer operating system

1871:Jim Beveridge, Robert Wiener:

1831:Programming with POSIX Threads

1393:IEEE Transactions on Computers

1361:Win32 Thread Information Block

1272:Hardware description languages

1255:In programming models such as

831:used by older versions of the

809:Hybrid implementation examples

1:

2071:Simultaneous and heterogenous

1847:, O'Reilly & Associates,

793::1") threading. In general, "

2781:Just enough operating system

2766:Distributed operating system

2659:Category: Parallel computing

1146:Programming language support

995:of the locking is too fine.

910:and process state, the term

679:1:1 (kernel-level threading)

548:Multi-user operating systems

492:translation lookaside buffer

288:translation lookaside buffer

2894:User space and kernel space

1574:10.1007/978-3-031-15922-0_1

1346:Simultaneous multithreading

1297:Computer programming portal

966:To prevent this, threading

608:simultaneous multithreading

516:inter-process communication

454:inter-process communication

3228:

2801:Real-time operating system

1966:High-performance computing

1772:"The Problem with Threads"

1263:, an array of threads run

1021:

972:synchronization primitives

943:

614:processor, under the name

568:cooperative multithreading

502:Lower resource consumption

378:

280:interprocess communication

218:

26:

2997:Multilevel feedback queue

2992:Fixed-priority preemptive

2776:Hobbyist operating system

2771:Embedded operating system

2648:

2600:Automatic parallelization

2236:Application checkpointing

1637:Operating system concepts

1306:Clone (Linux system call)

1261:data parallel computation

1223:Message Passing Interface

1100:must be careful to avoid

989:symmetric multiprocessing

902:is the processing of one

734::1 (user-level threading)

628:introduced the dual-core

552:preemptive multithreading

18:Thread (computer science)

3040:General protection fault

2791:Network operating system

2745:User features comparison

1431:Modern Operating Systems

1165:Many implementations of

853:Glasgow Haskell Compiler

522:Thread crashes a process

445:processes have separate

381:Fiber (computer science)

290:(TLB), notably on x86).

191:with the term "thread".

2786:Mobile operating system

2615:Embarrassingly parallel

2610:Deterministic algorithm

1859:Java Thread Programming

1506:"Erlang: 3.1 Processes"

1405:10.1109/tc.1979.1675439

1246:global interpreter lock

855:(GHC) for the language

641:central processing unit

390:cooperatively scheduled

335:libraries, thus called

225:At the kernel level, a

41:Thread (disambiguation)

2889:Loadable kernel module

2330:Associative processing

2286:Non-blocking algorithm

2092:Clustered multi-thread

1666:. 2002. Archived from

1455:Saltzer, Jerome Howard

1331:Non-blocking algorithm

1321:Multi-core (computing)

916:functional programming

874:light-weight processes

829:Light-weight processes

630:Athlon 64 X2

84:

57:

39:. For other uses, see

2957:Process control block

2923:Computer multitasking

2761:Disk operating system

2446:Hardware acceleration

2359:Superscalar processor

2349:Dataflow architecture

1946:Distributed computing

1673:on February 26, 2009.

1633:Silberschatz, Abraham

1519:Ignatchenko, Sergey.

1316:Computer multitasking

1139:Synchronization costs

1081:large number of cores

815:Scheduler activations

268:process control block

229:contains one or more

124:dynamically allocated

63:

52:

3207:Concurrent computing

3128:Virtual tape library

2720:Forensic engineering

2325:Pipelined processing

2274:Explicit parallelism

2269:Implicit parallelism

2259:Dataflow programming

1845:Pthreads Programming

1714:ALGOL-like languages

1427:Tanenbaum, Andrew S.

1077:parallel across data

896:computer programming

845:The OS for the Tera-

753:GNU Portable Threads

412:Threads vs processes

321:thread-local storage

249:light-weight process

133:at any given time.

3212:Threads (computing)

3137:Supporting concepts

3123:Virtual file system

2549:Parallel Extensions

2354:Pipelined processor

1829:David R. Butenhof:

1351:Thread pool pattern

1124:over resources. As

1024:Thread pool pattern

1000:condition variables

221:Process (computing)

189:Victor A. Vyssotsky

3060:Segmentation fault

2908:Process management

2423:Massively parallel

2401:distributed shared

2221:Cache invalidation

2185:Instruction window

1976:Manycore processor

1956:Massively parallel

1951:Parallel computing

1932:Parallel computing

1875:, Addison-Wesley,

1833:, Addison-Wesley,

1487:Dr. Dobb's Journal

1384:(September 1979).

1336:Priority inversion

1110:mutually exclusive

924:parallel execution

803:priority inversion

770:(hybrid threading)

606:added support for

564:priority inversion


126:variables and non-

85:

58:

3194:

3193:

3050:Memory protection

3021:Memory management

3015:

3014:

3007:Shortest job next

2902:

2901:

2701:Operating systems

2667:

2666:

2620:Parallel slowdown

2254:Stream processing

2144:Karp–Flatt metric

1889:, Prentice Hall,

1728:978-0-8176-3937-2

1719:Birkhäuser Verlag

1697:978-1-890774-09-7

1583:978-3-031-15921-3

1004:critical sections

649:context switching

572:run to completion

556:context switching

474:are said to have

460:context switching

424:in several ways:

420:operating-system

284:context switching

276:process isolation

181:

180:

82:Context Switching


3219:

3149:Computer network


2630:Software lockout

2429:Computer cluster

2364:Vector processor

2319:Array processing

2304:Flynn's taxonomy

2211:Memory coherence

1986:Computer network


1133:Being untestable

1055:non-blocking I/O

912:single threading

900:single-threading

871:4.x implemented

838:Marcel from the

835:operating system

674:Threading models

667:hardware threads

645:software threads

600:context switches

596:hardware threads

578:by waiting on a

550:generally favor

466:Systems such as

351:are also called

349:virtual machines

311:, a copy of the

270:. Processes are

199:Related concepts

176:

173:

155:

148:

131:global variables

105:operating system

89:computer science

21:

3227:

3226:

3222:

3221:

3220:

3218:

3217:

3216:

3197:

3196:

3195:

3190:

3132:

3093:Defragmentation

3078:

3069:

3055:Protection ring

3024:

3011:

2983:

2976:

2898:

2872:

2810:

2749:

2703:

2698:

2668:

2663:

2644:

2588:

2494:Coarray Fortran

2450:

2434:Beowulf cluster

2290:

2240:

2231:Synchronization

2216:Cache coherence

2206:Multiprocessing

2194:

2158:

2139:Cost efficiency

2134:Gustafson's law

2102:

2046:

1995:

1971:Multiprocessing

1961:Cloud computing

1934:

1929:

1899:

1885:Uresh Vahalia:

1825:

1823:Further reading

1820:

1819:

1809:

1808:

1804:

1784:

1783:

1779:

1769:

1768:

1761:

1741:

1740:

1736:

1729:

1721:. p. 157.

1717:. Vol. 2.

1382:Lamport, Leslie

1380:

1379:

1375:

1370:

1365:

1295:

1288:

1285:

1148:

1120:, livelocks or

1102:race conditions

1093:Synchronization

1065:Parallelization

1039:

1026:

1020:

960:race conditions

948:

940:

928:multiprocessing

892:

866:

811:

788:

784:

772:

748:multi-processor

736:

681:

676:

617:hyper-threading

592:

536:

531:

512:message passing

490:) results in a

414:

383:

377:

341:multi-processor

329:

317:program counter

296:

223:

217:

201:

177:

171:

168:

161:needs expansion

146:

72:

47:

44:

23:

22:

15:

12:

11:

5:

3179:User interface

3176:

3166:

3161:

3156:

3151:

3146:

3140:

3138:

3134:

3133:

3131:

3130:

3125:

3120:

3115:

3110:

3105:

3103:File attribute

3100:

3095:

3090:

3084:

3082:

3071:

3070:

3068:

3067:

3065:Virtual memory

2937:Context switch

2625:Race condition

2369:Multiprocessor

2098:Hardware scout

2095:

2089:

2084:

2079:

2073:

2068:

2062:

2056:

2054:

2052:Multithreading

2048:

2047:

2045:

2044:

2039:

2034:

2029:

2024:

2019:

2014:

2009:

2003:

2001:

1997:

1996:

1994:

1993:

1991:Systolic array

1774:. UC Berkeley.

1759:

1734:

1727:

1703:

1696:

1676:

1652:

1645:

1617:

1597:

1582:

1549:

1511:

1497:

1480:(March 2005).

1469:

1446:

1439:

1418:

1399:(9): 690–691.

1210:.NET Framework

1198:cross-platform

1190:

1163:

1147:

1144:

1143:

1142:

1136:

1130:

1085:

1084:

1062:

1046:Responsiveness

1038:

1035:

1022:Main article:

1019:

1016:

944:Main article:

939:

936:

891:

888:

865:

862:

861:

860:

849:

843:

836:

826:

810:

807:

786:

782:

771:

761:

746:processors or

735:

729:

680:

677:

675:

672:

654:multiprocessor

624:processor and

622:Pentium D

612:Pentium 4

591:

588:

535:

532:

530:

527:

526:

525:

519:

505:

464:

463:

457:

450:

447:address spaces

443:

429:

413:

410:

379:Main article:

376:

373:

328:

325:

315:including the

295:

294:Kernel threads

292:

239:(user) threads

235:runtime system

231:kernel threads

219:Main article:

216:

213:

200:

197:

179:

178:

158:

156:

145:

142:

45:

24:

14:

13:

10:

9:

6:

4:

3:

2:

3045:Memory paging

2884:Device driver

2882:

2881:

2879:

2875:

2869:

2866:

2864:

2861:

2859:

2856:

2854:

2851:

2849:

2846:

2844:

2841:

2839:

2836:

2834:

2831:

2830:

2828:

2826:

2825:Architectures

2514:Global Arrays

2512:

2510:

2507:

2505:

2502:

2500:

2497:

2495:

2492:

2490:

2487:

2485:

2482:

2480:

2477:

2475:

2472:

2470:

2467:

2465:

2462:

2461:

2459:

2457:

2453:

2447:

2444:

2442:

2441:Grid computer

1895:0-13-101908-2

1892:

1888:

1884:

1882:

1881:0-201-44234-5

1878:

1874:

1870:

1868:

1867:0-672-31585-8

1864:

1860:

1856:

1854:

1853:1-56592-115-1

1850:

1846:

1842:

1840:

1839:0-201-63392-2

1646:9781118063330

1538:cite AV media

1524:

1523:

1515:

1512:

1507:

1501:

1498:

1493:

1489:

1488:

1483:

1479:

1473:

1470:

1462:

1461:

1457:(July 1966).

1456:

1450:

1447:

1442:

1440:0-13-595752-4

1436:

1432:

1428:

1422:

1419:

1414:

1410:

1406:

1402:

1398:

1394:

1387:

1383:

1377:

1374:

1367:

1362:

1359:

1357:

1356:Thread safety

1268:architecture.

1266:

1265:the same code

1262:

1259:designed for

1258:

1254:

1251:

1247:

1243:

1239:

1235:

1232:

1228:

1224:

1220:

1216:

1211:

1207:

1203:

1199:

1196:(and usually

1195:

1191:

1188:

1184:

1180:

1176:

1175:POSIX Threads

1172:

1168:

1164:

1161:

1157:

1153:

1152:

1151:

1145:

1140:

1137:

1134:

1131:

1127:

1126:Edward A. Lee

1123:

1119:

1115:

1111:

1107:

1103:

1099:

1095:

1094:

1090:

1089:

1088:

1082:

1078:

1074:

1070:

1066:

1063:

1060:

1056:

1051:

1050:worker thread

1047:

1044:

1043:

1042:

1036:

1034:

1031:

1025:

1017:

1015:

1013:

1009:

1005:

1001:

996:

994:

990:

986:

981:

977:

973:

970:(APIs) offer

969:

964:

961:

957:

953:

947:

946:Thread safety

942:

937:

935:

931:

929:

925:

919:

917:

913:

909:

905:

901:

897:

889:

887:

884:

883:DragonFly BSD

757:State Threads

754:

749:

745:

744:multithreaded

741:

733:

730:

728:

726:

722:

718:

714:

710:

706:

702:

698:

697:GNU C Library

544:cooperatively

541:

533:

528:

523:

520:

517:

513:

509:

506:

503:

500:

499:

498:

495:

494:(TLB) flush.

493:

489:

485:

484:address-space

481:

477:

473:

469:

461:

458:

455:

451:

448:

444:

442:

438:

434:

430:

427:

426:

425:

423:

419:

411:

409:

407:

403:

399:

395:

391:

387:

382:

374:

372:

371:primitives).

370:

364:

360:

356:

354:

353:green threads

350:

346:

342:

338:

334:

326:

324:

322:

318:

314:

310:

306:

301:

300:kernel thread

209:cooperatively

206:

198:

196:

192:

190:

186:

175:

172:February 2021

166:

162:

159:This section

37:Threaded code

34:

30:

19:

3080:file systems

2972:Time-sharing

2966:

2199:Coordination

2174:

2129:Amdahl's law

2065:Simultaneous

1886:

1872:

1858:

1844:

1830:

1805:

1790:

1780:

1747:

1737:

1713:

1706:

1686:

1679:

1668:the original

1655:

1636:

1607:

1600:

1565:

1552:

1527:. Retrieved

1521:

1514:

1500:

1491:

1485:

1478:Sutter, Herb

1472:

1459:

1449:

1430:

1421:

1396:

1392:

1376:

1341:Protothreads

1194:higher level

1160:multitasking

1159:

1149:

1138:

1132:

1091:

1086:

1064:

1059:Unix signals

1049:

1045:

1040:

1030:thread pools

1027:

1018:Thread pools

997:

965:

949:

941:

932:

920:

911:

899:

893:

872:

867:

822:

818:

798:

794:

790:

778:

774:

773:

767:

763:

739:

737:

731:

705:LinuxThreads

682:

665:

644:

637:time slicing

634:

615:

593:

540:preemptively

537:

521:

507:

501:

496:

479:

478:threads and

475:

465:

418:multitasking

415:

398:user threads

384:

365:

361:

357:

337:user threads

336:

330:

327:User threads

299:

297:

271:

264:file handles

255:

253:

242:

238:

230:

226:

224:

205:preemptively

202:

193:

182:

169:

165:adding to it

160:

135:

128:thread-local

116:concurrently

113:

92:

86:

3098:Device file

3088:Boot loader

3002:Round-robin

2927:Cooperative

2863:Rump kernel

2853:Multikernel

2843:Microkernel

2740:Usage share

2635:Scalability

2396:distributed

2279:Concurrency

2246:Programming

2087:Cooperative

2076:Speculative

2012:Instruction

1857:Paul Hyde:

1187:beginthread

993:granularity

918:community.

632:processor.

602:. In 2002,

560:lock convoy

71:vs. Thread

35:calls, see

3201:Categories

3028:protection

2984:algorithms

2982:Scheduling

2931:Preemptive

2877:Components

2848:Monolithic

2715:Comparison

2640:Starvation

2379:asymmetric

2114:PRAM model

2082:Preemptive

1529:2020-11-24

1368:References

1240:for Ruby,

1106:rendezvous

1098:programmer

1008:semaphores

881:2.x+, and

847:Cray MTA-2

781:maps some

658:multi-core

529:Scheduling

468:Windows NT

456:mechanisms

439:and other

406:coroutines

343:machines (

78:Preemption

74:Scheduling

33:subroutine

3118:Partition

3035:Bus error

2962:Real-time

2942:Interrupt

2868:Unikernel

2833:Exokernel

2374:symmetric

2119:PEM model

1592:251692327

1183:process.h

1118:deadlocks

908:semantics

877:or LWPs.

703:or older

582:or if it

480:expensive

441:resources

422:processes

345:M:N model

333:userspace

313:registers

305:scheduler

260:resources

215:Processes

138:processes

101:scheduler

97:execution

55:processor

3164:Live USB

3026:resource

2916:Concepts

2754:Variants

2735:Timeline

2605:Deadlock

2593:Problems

2559:pthreads

2539:OpenHMPP

2464:Ateji PX

2425:computer

2296:Hardware

2163:Elements

2149:Slowdown

2060:Temporal

2042:Pipeline

1861:, Sams,

1792:Overload

1756:: 16–19.

1749:Overload

1429:(1992).

1283:See also

1274:such as

1238:Ruby MRI

1227:Ateji PX

1012:monitors

985:spinlock

974:such as

930:system.

842:project.

662:parallel

580:resource

272:isolated

3159:Live CD

3113:Journal

3077:access,

3075:Storage

2952:Process

2858:vkernel

2725:History

2708:General

2564:RaftLib

2544:OpenACC

2519:GPUOpen

2509:C++ AMP

2484:Charm++

2226:Barrier

2170:Process

2154:Speedup

1939:General

1795:(128).

1413:5679366

1276:Verilog

1242:CPython

1114:mutexes

1057:and/or

976:mutexes

904:command

857:Haskell

833:Solaris

717:FreeBSD

709:Solaris

647:. This

610:to the

584:starves

256:process

227:process

144:History

109:process

69:Process

65:Program

2967:Thread

2838:Hybrid

2816:Kernel

2657:

2534:OpenCL

2529:OpenMP

2474:Chapel

2391:shared

2386:Memory

2321:(SIMT)

2264:Models

2175:Thread

2107:Theory

2078:(SpMT)

2032:Memory

2017:Thread

2000:Levels

1893:

1879:

1865:

1851:

1837:

1799:: 4–7.

1752:(97).

1725:

1694:

1643:

1590:

1580:

1437:

1411:

1219:OpenMP

1208:, and

1206:Python

1073:OpenCL

1010:, and

952:couple

879:NetBSD

723:, and

713:NetBSD

639:: the

576:blocks

437:memory

402:OpenMP

386:Fibers

375:Fibers

319:, and

244:fibers

120:memory

93:thread

3169:Shell

3108:Inode

2504:Dryad

2469:Boost

2190:Array

2180:Fiber

2094:(CMT)

2067:(SMT)

1981:GPGPU

1671:(PDF)

1664:(PDF)

1612:(PDF)

1588:S2CID

1562:(PDF)

1464:(PDF)

1409:S2CID

1389:(PDF)

1192:Some

1122:races

1079:on a

926:on a

869:SunOS

721:macOS

693:Linux

689:Win32

604:Intel

476:cheap

433:state

394:yield

369:await

309:stack

2730:List

2569:ROCm

2499:CUDA

2489:Cilk

2456:APIs

2416:COMA

2411:NUMA

2342:MIMD

2337:MISD

2314:SIMD

2309:SISD

2037:Loop

2027:Data

2022:Task

1891:ISBN

1877:ISBN

1863:ISBN

1849:ISBN

1835:ISBN

1797:ACCU

1754:ACCU

1723:ISBN

1692:ISBN

1641:ISBN

1578:ISBN

1544:link

1494:(3).

1435:ISBN

1397:C-28

1257:CUDA

1231:CUDA

1215:Cilk

1202:Java

1179:Unix

1169:and

1156:PL/I

1154:IBM

1071:and

1069:CUDA

980:lock

851:The

701:NPTL

695:the

687:and

685:OS/2

472:OS/2

470:and

91:, a

67:vs.

3186:PXE

3174:CLI

3154:HAL

3144:API

2947:IPC

2584:ZPL

2579:TBB

2574:UPC

2554:PVM

2524:MPI

2479:HPX

2406:UMA

2007:Bit

1570:doi

1401:doi

1250:Tcl

1171:C++

978:to

956:IPC

894:In

840:PM2

738:An

725:iOS

656:or

626:AMD

542:or

488:x86

274:by

207:or

167:.

111:.

95:of

87:In

3203::

2929:,

1789:.

1762:^

1746:.

1620:^

1586:.

1576:.

1564:.

1492:30

1490:.

1484:.

1407:.

1395:.

1391:.

1233:).

1229:,

1221:,

1217:,

1204:,

1014:.

1006:,

1002:,

898:,

759:.

727:.

719:,

715:,

711:,

562:,

546:.

355:.

298:A

254:A

80:,

76:,

3023:,

2933:)

2925:(

2693:e

2686:t

2679:v

1924:e

1917:t

1910:v

1814:.

1731:.

1700:.

1649:.

1594:.

1572::

1546:)

1532:.

1508:.

1443:.

1415:.

1403::

1213:(

1189:.

1167:C

823:N

821::

819:M

799:N

797::

795:M

791:N

787:N

783:M

779:N

777::

775:M

768:N

766::

764:M

740:M

732:M

174:)

170:(

43:.

20:)

Text is available under the Creative Commons Attribution-ShareAlike License. Additional terms may apply.